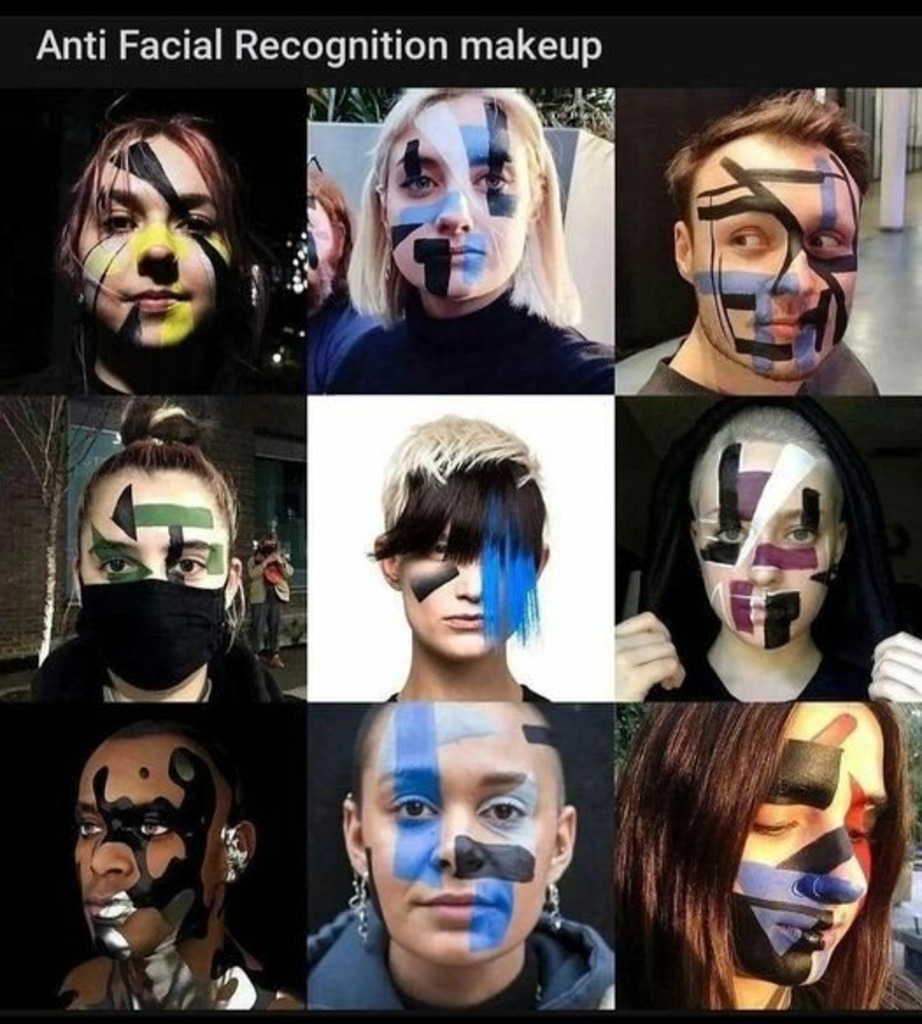

Amazon has registered 17 new patents for biometric technology intended to help its doorbell cameras identify “suspicious” people by scent, skin texture, fingerprints, eyes, voice, and gait.

The tech giant has been developing its doorbell security camera system since 2018, when it acquired Ring, the company behind the original technology. According to media reports, Jeff Bezos’ company is now preparing to let the devices identify “suspicious” people using biometric data such as skin texture, gait, fingerprints, voice, retina and iris patterns, and even odor.

On top of that, if Amazon’s new patents are anything to go by, all Ring doorbell cameras in a given neighborhood would be interconnected, sharing data with each other and creating a composite image of “suspicious” individuals.

One of the patents, for what media reports describe as a “neighborhood alert mode,” would allow users in one household to send photos and videos of someone they deem “suspicious” to their neighbors’ Ring cameras. Those cameras would then start recording too and could assemble a “series of ‘storyboard’ images for activity taking place across the fields of view of multiple cameras.”

Beyond this possible future interconnectivity among the Ring devices themselves, Amazon’s doorbell cameras already exchange information with 1,963 police and 383 fire departments across the US, according to Business Insider. Authorities do not even need a warrant to access Ring footage.
